Request in Scrapy

- Author: 五速梦信息网

- Date: 2026-04-04 13:28

Constructor parameters

    class scrapy.http.Request(
        url[,
        callback,
        method='GET',
        headers,
        body,
        cookies,
        meta,
        encoding='utf-8',
        priority=0,
        dont_filter=False,
        errback])

1. Creating a Request

    def parse_page1(self, response):
        # Request the next page; its response is handed to parse_page2
        return scrapy.Request("http://www.example.com/some_page.html",
                              callback=self.parse_page2)

    def parse_page2(self, response):
        # this would log http://www.example.com/some_page.html
        self.logger.info("Visited %s", response.url)

2. Passing data between callbacks through a Request

    def parse_page1(self, response):
        item = MyItem()
        item['main_url'] = response.url
        request = scrapy.Request("http://www.example.com/some_page.html",
                                 callback=self.parse_page2)
        # Attach the partially filled item so the next callback can finish it
        request.meta['item'] = item
        yield request

    def parse_page2(self, response):
        # response.meta exposes the meta dict of the originating request
        item = response.meta['item']
        item['other_url'] = response.url
        yield item

3. Special keys in Request.meta

4. FormRequest, the main subclass, used for logging in

    return [FormRequest(url="http://www.example.com/post/action",
                        formdata={'name': 'John Doe', 'age': ''},
                        callback=self.after_post)]
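When `formdata` is given, FormRequest sends it as a URL-encoded POST body. As a rough sketch of what goes over the wire, the standard library's `urlencode` produces the same kind of encoding for the dictionary used above. This only illustrates the encoding; it is not Scrapy's own code path.

```python
from urllib.parse import urlencode, parse_qs

formdata = {"name": "John Doe", "age": ""}

# application/x-www-form-urlencoded: spaces become '+', pairs are joined with '&'
body = urlencode(formdata).encode("utf-8")
print(body)  # b'name=John+Doe&age='

# Decoding the body recovers the original fields, including the empty one
decoded = parse_qs(body.decode("utf-8"), keep_blank_values=True)
print(decoded)  # {'name': ['John Doe'], 'age': ['']}
```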

A more detailed login example

    import scrapy

    class LoginSpider(scrapy.Spider):
        name = 'example.com'
        start_urls = ['http://www.example.com/users/login.php']

        def parse(self, response):
            # Pre-fill the login form found in the response and submit it
            return scrapy.FormRequest.from_response(
                response,
                formdata={'username': 'john', 'password': 'secret'},
                callback=self.after_login
            )

        def after_login(self, response):
            # check that login succeeded before going on;
            # response.text is the decoded body (response.body is bytes in Python 3)
            if "authentication failed" in response.text:
                self.logger.error("Login failed")
                return

            # continue scraping with authenticated session...

- 上一篇: Scrapy中的Request和Response

- 下一篇: Scrapy中的crawlspider

相关文章

-

Scrapy中的Request和Response

Scrapy中的Request和Response

- 互联网

- 2026年04月04日

-

Scrapy中的Request和日志分析

Scrapy中的Request和日志分析

- 互联网

- 2026年04月04日

-

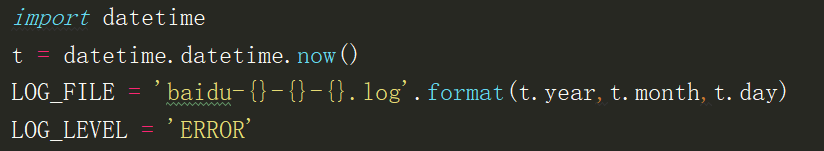

Scrapy中的反反爬、logging设置、Request参数及POST请求

Scrapy中的反反爬、logging设置、Request参数及POST请求

- 互联网

- 2026年04月04日

-

Scrapy中的crawlspider

Scrapy中的crawlspider

- 互联网

- 2026年04月04日

-

scrapy中Request中常用参数

scrapy中Request中常用参数

- 互联网

- 2026年04月04日

-

scrapy之Request对象

scrapy之Request对象

- 互联网

- 2026年04月04日