Request and Response in Scrapy

- Author: 五速梦信息网

- Date: 2026-04-04 13:28

Request

Partial source code of Request:

```python
# Partial code
class Request(object_ref):

    def __init__(self, url, callback=None, method='GET', headers=None, body=None,
                 cookies=None, meta=None, encoding='utf-8', priority=0,
                 dont_filter=False, errback=None):

        self._encoding = encoding  # this one has to be set first
        self.method = str(method).upper()
        self._set_url(url)
        self._set_body(body)
        assert isinstance(priority, int), "Request priority not an integer: %r" % priority
        self.priority = priority

        assert callback or not errback, "Cannot use errback without a callback"
        self.callback = callback
        self.errback = errback

        self.cookies = cookies or {}
        self.headers = Headers(headers or {}, encoding=encoding)
        self.dont_filter = dont_filter

        self._meta = dict(meta) if meta else None

    @property
    def meta(self):
        if self._meta is None:
            self._meta = {}
        return self._meta
```

The most commonly used parameters are:

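url: the URL to request; its response is then processed in the next step.

callback: the function that will handle the Response returned for this request.

method: usually does not need to be specified, since it defaults to GET. It can be set to "GET", "POST", "PUT", and so on. The source above upper-cases it with str(method).upper(), so a lowercase value also ends up upper-case.

headers: the headers sent with the request. Usually not needed. Typical contents look like this: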

```
Host: media.readthedocs.org
User-Agent: Mozilla/5.0 (Windows NT 6.2; WOW64; rv:33.0) Gecko/20100101 Firefox/33.0
Accept: text/css,*/*;q=0.1
Accept-Language: zh-cn,zh;q=0.8,en-us;q=0.5,en;q=0.3
Accept-Encoding: gzip, deflate
Referer: http://scrapy-chs.readthedocs.org/zh_CN/0.24/
Cookie: _ga=GA1.2.1612165614.1415584110;
Connection: keep-alive
If-Modified-Since: Mon, 25 Aug 2014 21:59:35 GMT
Cache-Control: max-age=0
```

meta: used often, to pass data between different requests. It is a dict. Besides your own data, it can also carry special keys that Scrapy components read; in the example below (which also passes the cookies parameter), dont_merge_cookies is such a key, read by the cookies middleware.

```python
request_with_cookies = Request(
    url="http://www.example.com",
    cookies={'currency': 'USD', 'country': 'UY'},
    meta={'dont_merge_cookies': True}
)
```

encoding: the default 'utf-8' is usually fine.

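dont_filter: indicates that this request should not be filtered by the scheduler. Use it when you want to issue the same request several times and ignore the duplicates filter. Defaults to False.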

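errback: the error-handling function, called if an exception is raised while processing the request (including pages that failed with HTTP errors such as 404). It receives a Twisted Failure as its first argument.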

Response

Partial source code of Response:

```python
# Partial code
class Response(object_ref):

    def __init__(self, url, status=200, headers=None, body='', flags=None, request=None):
        self.headers = Headers(headers or {})
        self.status = int(status)
        self._set_body(body)
        self._set_url(url)
        self.request = request
        self.flags = [] if flags is None else list(flags)

    @property
    def meta(self):
        try:
            return self.request.meta
        except AttributeError:
            raise AttributeError("Response.meta not available, this response "
                                 "is not tied to any request")
```

Most parameters are similar to those above:

status: the response status code

_set_body(body): sets the response body

_set_url(url): sets the response URL

self.request = request: the Request object that produced this response

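Sending POST requests

Use yield scrapy.FormRequest(url, formdata=formdata, callback=callback) to send a POST request.

If you want the spider to send a POST request as soon as it starts, override the spider's start_requests(self) method; the default implementation, which reads start_urls, is then no longer used.

```python
import scrapy


class mySpider(scrapy.Spider):
    name = "my_spider"  # placeholder; every spider needs a name
    # start_urls = ["http://www.example.com/"]

    def start_requests(self):
        url = 'http://www.renren.com/PLogin.do'

        # FormRequest is Scrapy's way of sending a POST request
        yield scrapy.FormRequest(
            url=url,
            formdata={"email": "loaderman@163.com", "password": "loaderman"},
            callback=self.parse_page
        )

    def parse_page(self, response):
        # do something
        pass
```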

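Simulating login

Use the FormRequest.from_response() method to simulate a user login.

Websites often pre-populate certain form fields (such as session-related data, or authentication tokens on login pages) through `<input type="hidden">` elements.

When scraping with Scrapy, you usually want these fields filled in automatically and only need to override a few of them, such as the username and password. FormRequest.from_response() does this.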

Here is an example spider that uses this method:

```python
import scrapy


class LoginSpider(scrapy.Spider):
    name = 'example.com'
    start_urls = ['http://www.example.com/users/login.php']

    def parse(self, response):
        return scrapy.FormRequest.from_response(
            response,
            formdata={'username': 'john', 'password': 'secret'},
            callback=self.after_login
        )

    def after_login(self, response):
        # check login succeed before going on
        # (response.body is bytes in Python 3, so search response.text instead)
        if "authentication failed" in response.text:
            self.logger.error("Login failed")
            return

        # continue scraping with authenticated session...
```

Reference example: a Zhihu spider

zhihuSpider.py (spider code)

```python
# -*- coding: utf-8 -*-
from scrapy import Request, FormRequest
from scrapy.linkextractors import LinkExtractor
from scrapy.selector import Selector
from scrapy.spiders import CrawlSpider, Rule

from zhihu.items import ZhihuItem


class ZhihuSpider(CrawlSpider):
    name = "zhihu"
    allowed_domains = ["www.zhihu.com"]
    start_urls = [
        "http://www.zhihu.com"
    ]
    rules = (
        Rule(LinkExtractor(allow=(r'/question/\d+#.*?', )), callback='parse_page', follow=True),
        Rule(LinkExtractor(allow=(r'/question/\d+', )), callback='parse_page', follow=True),
    )
    headers = {
        "Accept": "*/*",
        "Accept-Encoding": "gzip,deflate",
        "Accept-Language": "en-US,en;q=0.8,zh-TW;q=0.6,zh;q=0.4",
        "Connection": "keep-alive",
        "Content-Type": "application/x-www-form-urlencoded; charset=UTF-8",
        "User-Agent": "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_10_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/38.0.2125.111 Safari/537.36",
        "Referer": "http://www.zhihu.com/"
    }

    # Overrides the spider's start_requests() to issue a custom request;
    # once it succeeds, the callback is called
    def start_requests(self):
        return [Request("https://www.zhihu.com/login", meta={'cookiejar': 1}, callback=self.post_login)]

    def post_login(self, response):
        print('Preparing login')
        # Extract the _xsrf field from the returned login page; it is needed to submit the form successfully
        xsrf = Selector(response).xpath('//input[@name="_xsrf"]/@value').extract()[0]
        print(xsrf)
        # FormRequest.from_response is a function provided by Scrapy for posting forms;
        # after a successful login, the after_login callback is called
        return [FormRequest.from_response(response,  # "http://www.zhihu.com/login",
                                          meta={'cookiejar': response.meta['cookiejar']},
                                          headers=self.headers,  # note the headers here
                                          formdata={
                                              '_xsrf': xsrf,
                                              'email': '1095511864@qq.com',
                                              'password': ''
                                          },
                                          callback=self.after_login,
                                          dont_filter=True
                                          )]

    def after_login(self, response):
        for url in self.start_urls:
            # Equivalent of the deprecated make_requests_from_url(url).
            # The cookiejar meta key is not "sticky", so pass it on to keep the
            # logged-in session (requests built by the rules do not carry it either).
            yield Request(url, meta={'cookiejar': response.meta['cookiejar']}, dont_filter=True)

    def parse_page(self, response):
        problem = Selector(response)
        item = ZhihuItem()
        item['url'] = response.url
        item['name'] = problem.xpath('//span[@class="name"]/text()').extract()
        print(item['name'])
        item['title'] = problem.xpath('//h2[@class="zm-item-title zm-editable-content"]/text()').extract()
        item['description'] = problem.xpath('//div[@class="zm-editable-content"]/text()').extract()
        item['answer'] = problem.xpath('//div[@class=" zm-editable-content clearfix"]/text()').extract()
        return item
```

Item class definition (items.py)

```python
from scrapy.item import Item, Field


class ZhihuItem(Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    url = Field()          # URL of the scraped question
    title = Field()        # title of the question
    description = Field()  # description of the question
    answer = Field()       # answers to the question
    name = Field()         # name of the individual user
```

settings.py: set the download delay

```python
BOT_NAME = 'zhihu'

SPIDER_MODULES = ['zhihu.spiders']
NEWSPIDER_MODULE = 'zhihu.spiders'

DOWNLOAD_DELAY = 0.25  # set the download delay to 250 ms
```

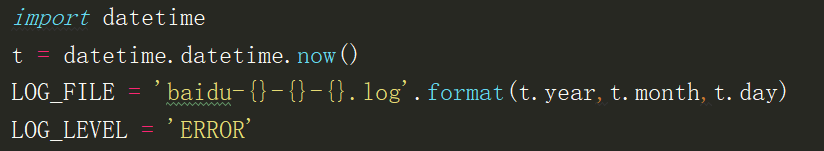
