[PyTorch入门]之迁移学习

- 作者: 五速梦信息网

- 时间: 2026年05月04日 13:59

迁移学习教程

来自这里。

在实践中,很少有人从头开始训练整个卷积网络(使用随机初始化),因为拥有足够大小的数据集相对较少。相反,通常在非常大的数据集(例如ImageNet,它包含120万幅、1000个类别的图像)上对ConvNet进行预训练,然后使用ConvNet作为初始化或固定的特征提取器来执行感兴趣的任务。

两个主要的迁移学习的场景如下:

- Finetuning the convert:与随机初始化不同,我们使用一个预训练的网络初始化网络,就像在imagenet 1000 dataset上训练的网络一样。其余的训练看起来和往常一样。

- ConvNet as fixed feature extractor:在这里,我们将冻结所有网络的权重,除了最后的全连接层。最后一个全连接层被替换为一个具有随机权重的新层,并且只训练这一层。

#!/usr/bin/env python3

License: BSD

Author: Sasank Chilamkurthy

from future import print_function,division

import torch

import torch.nn as nn

import torch.optim as optim

import numpy as np

import torchvision

from torchvision import datasets,models,transforms

import matplotlib.pyplot as plt

import time

import os

import copy

plt.ion() # 交互模式

导入数据

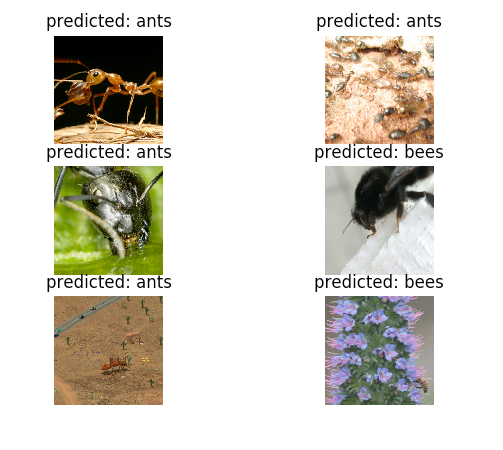

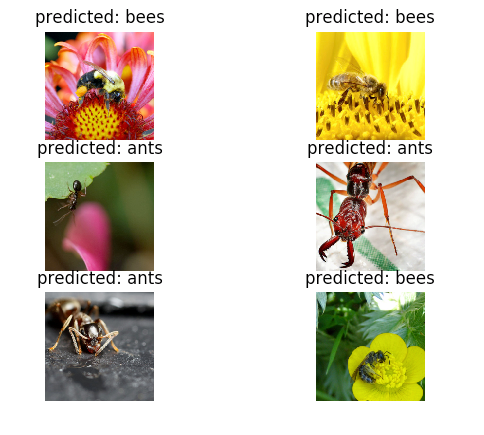

torchvisiontorch.utils.data我们今天要解决的问题是训练一个模型来区分蚂蚁和蜜蜂。我们有蚂蚁和蜜蜂的训练图像各120张。每一类有75张验证图片。通常,如果是从零开始训练,这是一个非常小的数据集。因为我们要使用迁移学习,所以我们的例子应该具有很好地代表性。

这个数据集是一个非常小的图像子集。

你可以从这里下载数据并解压到当前目录。

# 训练数据的扩充及标准化

只进行标准化验证

data_transforms = {

'train': transforms.Compose([<br/>

transforms.RandomResizedCrop(224),<br/>

transforms.RandomHorizontalFlip(),<br/>

transforms.ToTensor(),<br/>

transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])<br/>

]),<br/>

'val': transforms.Compose([<br/>

transforms.Resize(256),<br/>

transforms.CenterCrop(224),<br/>

transforms.ToTensor(),<br/>

transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])<br/>

])<br/>

}

data_dir = ‘data/hymenoptera_data’

image_datasets = {x: datasets.ImageFolder(os.path.join(

data_dir, x), data_transforms[x]) for x in ['train', 'val']}<br/>

dataloaders = {x: torch.utils.data.DataLoader(

image_datasets[x], batch_size=4, shuffle=True, num_workers=4) for x in ['train', 'val']}

dataset_size = {x:len(image_datasets[x]) for x in [‘train’,‘val’]}

class_name = image_datasets[‘train’].classes

device = torch.device(‘cuda:0’ if torch.cuda.is_available() else ‘cpu’)

可视化一些图像

为了理解数据扩充,我们可视化一些训练图像。

def imshow(inp, title=None):

inp = inp.numpy().transpose((1, 2, 0))<br/>

mean = np.array([0.485, 0.456, 0.406])<br/>

std = np.array([0.229, 0.224, 0.225])<br/>

inp = std * inp + mean<br/>

inp = np.clip(inp, 0, 1)<br/>

plt.imshow(inp)<br/>

if title is not None:<br/>

plt.title(title)<br/>

plt.pause(10) # 暂停一会,以便更新绘图

获取一批训练数据

inputs, classes = next(iter(dataloaders[‘train’]))

从批处理中生成网格

out = torchvision.utils.make_grid(inputs)

imshow(out, title=[class_name[x] for x in classes])

训练模型

现在我们来实现一个通用函数来训练一个模型。在这个函数中,我们将:

- 调整学习率

- 保存最优模型

scheduletorch.optim.lr_schedulerdef train_model(model, criterion, optimizer, schduler, num_epochs=25):

since = time.time()

best_model_wts = copy.deepcopy(model.state_dict())

best_acc = 0.0

for epoch in range(num_epochs):

print('Epoch {}/{}'.format(epoch, num_epochs-1))<br/>

print('-'*10)

for phase in [‘train’, ‘val’]:

if phase == 'train':<br/>

schduler.step()<br/>

model.train() # 训练模型<br/>

else:<br/>

model.eval() # 评估模型

running_loss = 0.0

running_corrects = 0

for inputs, labels in dataloaders[phase]:

inputs = inputs.to(device)<br/>

labels = labels.to(device)

零化参数梯度

optimizer.zero_grad()

前向传递

# 如果只是训练的话,追踪历史<br/>

with torch.set_grad_enabled(phase == 'train'):<br/>

outputs = model(inputs)<br/>

_, preds = torch.max(outputs, 1)<br/>

loss = criterion(outputs, labels)

训练时,反向传播 + 优化

if phase == 'train':<br/>

loss.backward()<br/>

optimizer.step()

统计

running_loss += loss.item() * inputs.size(0)<br/>

running_corrects += torch.sum(preds == labels.data)

epoch_loss = running_loss / dataset_size[phase]

epoch_acc = running_corrects.double() / dataset_size[phase]

print(‘{} Loss: {:.4f} Acc: {:.4f}’.format(

phase, epoch_loss, epoch_acc))

很拷贝模型

if phase == 'val' and epoch_acc > best_acc:<br/>

best_acc = epoch_acc<br/>

best_model_wts = copy.deepcopy(model.state_dict())

print()

time_elapsed = time.time() - since

print('Training complete in {:.0f}m {:.0f}s'.format(<br/>

time_elapsed // 60, time_elapsed % 60))<br/>

print('Best val Acc: {:4f}'.format(best_acc))

导入最优模型权重

model.load_state_dict(best_model_wts)<br/>

return model<br/>

可视化模型预测

展示部分预测图像的通用函数:

def visualize_model(model, num_images=6):

was_training = model.training<br/>

model.eval()<br/>

images_so_far = 0<br/>

fig = plt.figure()

with torch.no_grad():

for i, (inputs, labels) in enumerate(dataloaders['val']):<br/>

inputs = inputs.to(device)<br/>

labels = labels.to(device)

outputs = model(inputs)

_, preds = torch.max(outputs, 1)

for j in range(inputs.size()[0]):

images_so_far += 1<br/>

ax = plt.subplot(num_images//2, 2, images_so_far)<br/>

ax.axis('off')<br/>

ax.set_title('predicted: {}'.format(class_name[preds[j]]))<br/>

imshow(inputs.cpu().data[j])

if images_so_far == num_images:

model.train(mode=was_training)<br/>

return

model.train(mode=was_training)

Finetuning the convnet

加载预处理的模型和重置最后的全连接层:

model_ft = models.resnet18(pretrained=True)

num_ftrs = model_ft.fc.in_features

model_ft.fc = nn.Linear(num_ftrs, 2)

model_ft = model_ft.to(device)

criterion = nn.CrossEntropyLoss()

优化所有参数

optimizer_ft = optim.SGD(model_ft.parameters(), lr=0.001, momentum=0.9)

没7次,学习率衰减0.1

exp_lr_scheduler = torch.optim.lr_scheduler.StepLR(

optimizer_ft, step_size=7, gamma=0.1)<br/>

训练和评估

在CPU上可能会花费15-25分钟,但是在GPU上,少于1分钟。

model_ft = train_model(model_ft, criterion, optimizer_ft,

exp_lr_scheduler, num_epochs=25)<br/>

visualize_model(model_ft)

ConvNet作为固定特征提取器

requires_grad=Falsebackward()model_conv = models.resnet18(pretrained=True)

for param in model_conv.parameters():

param.requires_grad = False

新构造模块的参数默认requires_grad=True

num_ftrs = model_conv.fc.in_features

model_conv.fc = nn.Linear(num_ftrs, 2)

model_conv = model_conv.to(device)

criterion = nn.CrossEntropyLoss()

优化所有参数

optimizer_ft = optim.SGD(model_conv.parameters(), lr=0.001, momentum=0.9)

没7次,学习率衰减0.1

exp_lr_scheduler = torch.optim.lr_scheduler.StepLR(

optimizer_ft, step_size=7, gamma=0.1)

model_conv = train_model(model_conv, criterion, optimizer_ft,

exp_lr_scheduler, num_epochs=25)<br/>

visualize_model(model_conv)

plt.ioff()

plt.show()

相关文章

-

![[Search Engine] 搜索引擎技术之查询处理](http://img.my.csdn.net/uploads/201209/14/1347599934_8638.jpg)

[Search Engine] 搜索引擎技术之查询处理

[Search Engine] 搜索引擎技术之查询处理

- 互联网

- 2026年05月04日

-

[Shell] Shell 中的算术

[Shell] Shell 中的算术

- 互联网

- 2026年05月04日

-

![[svn]查看,删除svn账号](https://img2018.cnblogs.com/blog/840179/201904/840179-20190410103848955-49262830.png)

[svn]查看,删除svn账号

[svn]查看,删除svn账号

- 互联网

- 2026年05月04日

-

[Python爬虫] scrapy爬虫系列 《一》.安装及入门介绍

[Python爬虫] scrapy爬虫系列 《一》.安装及入门介绍

- 互联网

- 2026年05月04日

-

![[Python]Conda 介绍及常用命令](https://images2018.cnblogs.com/blog/759200/201804/759200-20180423223627180-252301114.png)

[Python]Conda 介绍及常用命令

[Python]Conda 介绍及常用命令

- 互联网

- 2026年05月04日

-

[PHP] 简单多进程并发

[PHP] 简单多进程并发

- 互联网

- 2026年05月04日

![[Python爬虫] scrapy爬虫系列 《一》.安装及入门介绍](http://img.blog.csdn.net/20151023221457744)