ClickHouse Cluster Deployment

- Author: 五速梦信息网

- Date: 2026-06-03 13:50

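1. Cluster node information (every hostname below must resolve on every node, for example through /etc/hosts)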

10.12.110.201 ch201

10.12.110.202 ch202

10.12.110.203 ch203
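The hostnames above can be made resolvable on every node by adding the mappings to the hosts file. The shell sketch below assumes name resolution goes through a hosts file (default /etc/hosts). `add_cluster_hosts` is a hypothetical helper name. The function skips hostnames that are already present, so you can run it more than once safely.

```shell
# add_cluster_hosts [hosts_file]
# Appends the "IP hostname" pairs from section 1 to a hosts file
# (default /etc/hosts), skipping hostnames that are already present.
# Assumption: resolution is done through this file; pass a scratch file to try it first.
add_cluster_hosts() {
  local hosts_file ip name
  hosts_file="${1:-/etc/hosts}"
  while read -r ip name; do
    # Skip hostnames that already appear as a whole word in the file.
    if ! grep -qw -- "$name" "$hosts_file" 2>/dev/null; then
      echo "$ip $name" >> "$hosts_file"
    fi
  done <<EOF
10.12.110.201 ch201
10.12.110.202 ch202
10.12.110.203 ch203
EOF
}
```

For example, source the snippet and run add_cluster_hosts as root on ch201, ch202 and ch203. To inspect the result first, run add_cluster_hosts /tmp/hosts.test.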

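2. Set up a ZooKeeper cluster

Set up a ZooKeeper cluster on these three nodes (skip this step if you already have one). Start by configuring one node as follows:

2.1. Download the zookeeper-3.4.13.tar.gz package and place it in the same directory (/apps/) on the three servers above.

2.2. Go to /apps/ and extract the archive: tar -zxvf zookeeper-3.4.13.tar.gz

2.3. Go to ZooKeeper's conf directory, copy zoo_sample.cfg to zoo.cfg (cp zoo_sample.cfg zoo.cfg), and edit zoo.cfg: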

```
tickTime=2000
initLimit=10
syncLimit=5
dataDir=/apps/zookeeper-3.4.13/data/zookeeper
dataLogDir=/apps/zookeeper-3.4.13/log/zookeeper
clientPort=2182
autopurge.purgeInterval=0
globalOutstandingLimit=200
server.1=ch201:2888:3888
server.2=ch202:2888:3888
server.3=ch203:2888:3888
```
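You can also write the same settings with a small script instead of editing zoo.cfg by hand. This keeps the file identical on all three nodes. This is a sketch. `write_zoo_cfg` is a hypothetical helper, and it assumes the /apps/zookeeper-3.4.13 layout used in this article. To use a different ZooKeeper directory, pass it as the first argument.

```shell
# write_zoo_cfg [zk_home]
# Writes <zk_home>/conf/zoo.cfg with the settings from step 2.3.
# Assumption: zk_home defaults to the /apps/zookeeper-3.4.13 layout of this article;
# dataDir and dataLogDir are derived from it.
write_zoo_cfg() {
  local zk_home
  zk_home="${1:-/apps/zookeeper-3.4.13}"
  mkdir -p "$zk_home/conf"
  cat > "$zk_home/conf/zoo.cfg" <<EOF
tickTime=2000
initLimit=10
syncLimit=5
dataDir=$zk_home/data/zookeeper
dataLogDir=$zk_home/log/zookeeper
clientPort=2182
autopurge.purgeInterval=0
globalOutstandingLimit=200
server.1=ch201:2888:3888
server.2=ch202:2888:3888
server.3=ch203:2888:3888
EOF
}
```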

2.4. Create the required directories

$:mkdir -p /apps/zookeeper-3.4.13/data/zookeeper

$:mkdir -p /apps/zookeeper-3.4.13/log/zookeeper

When the configuration is complete, copy the whole zookeeper directory to the other two nodes with scp.

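2.5. Set myid

On each node, write that node's ID into the myid file inside dataDir. The ID must match the node's server.N entry in zoo.cfg.

$: vim /apps/zookeeper-3.4.13/data/zookeeper/myid # 1 on ch201, 2 on ch202, 3 on ch203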
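The myid file must contain the N of the matching server.N line in zoo.cfg, so you can also derive it from the hostname. The sketch below makes two assumptions: the hostnames are exactly ch201, ch202 and ch203 (as returned by hostname -s), and the data directory is the dataDir from zoo.cfg. `myid_for_host` and `write_myid` are hypothetical names.

```shell
# myid_for_host <hostname>
# Prints the ZooKeeper id for a host, matching server.1/2/3 in zoo.cfg.
# Assumption: the cluster hostnames are exactly ch201, ch202, ch203.
myid_for_host() {
  case "$1" in
    ch201) echo 1 ;;
    ch202) echo 2 ;;
    ch203) echo 3 ;;
    *) echo "unknown host: $1" >&2; return 1 ;;
  esac
}

# write_myid [data_dir] [hostname]
# Writes <data_dir>/myid; data_dir must equal dataDir in zoo.cfg.
write_myid() {
  local data_dir host id
  data_dir="${1:-/apps/zookeeper-3.4.13/data/zookeeper}"
  host="${2:-$(hostname -s)}"
  # Refuse to write anything for a host that is not in the cluster.
  id="$(myid_for_host "$host")" || return 1
  mkdir -p "$data_dir"
  echo "$id" > "$data_dir/myid"
}
```

Run write_myid on each node after copying the ZooKeeper directory. It writes nothing on a host it does not recognize.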

2.6. Go to ZooKeeper's bin directory and start the ZooKeeper service. It must be started on every node.

$: ./zkServer.sh start

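2.7. After startup, check the status of each node

$: ./zkServer.sh status

One node should be the leader and the other two followers. This shows that the ZooKeeper cluster was deployed successfully.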
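With three nodes you can check the roles by eye, but you can also script the check. The sketch below reads the combined output of ./zkServer.sh status from all nodes (lines such as Mode: leader or Mode: follower). It succeeds only if there is exactly one leader and N-1 followers. `check_zk_modes` is a hypothetical helper. The usage example collects the output over ssh, which assumes passwordless ssh between the nodes.

```shell
# check_zk_modes [expected_nodes]
# Reads concatenated "zkServer.sh status" output on stdin and checks that
# there is exactly one "Mode: leader" and expected_nodes-1 "Mode: follower".
check_zk_modes() {
  local expected_nodes output leaders followers
  expected_nodes="${1:-3}"
  output="$(cat)"
  # grep -c prints 0 when nothing matches.
  leaders=$(printf '%s\n' "$output" | grep -c '^Mode: leader')
  followers=$(printf '%s\n' "$output" | grep -c '^Mode: follower')
  if [ "$leaders" -eq 1 ] && [ "$followers" -eq $((expected_nodes - 1)) ]; then
    echo "OK: 1 leader, $followers followers"
  else
    echo "FAIL: $leaders leader(s), $followers follower(s)" >&2
    return 1
  fi
}
```

For example: for h in ch201 ch202 ch203; do ssh "$h" /apps/zookeeper-3.4.13/bin/zkServer.sh status 2>&1; done | check_zk_modes. If a node reports Mode: standalone, its server.N entries or myid usually were not picked up.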

2.8. Test ZooKeeper

$:./zkCli.sh -server ch201:2182

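If the client cannot connect, check whether the firewall is blocking the ZooKeeper ports (for example, stop it with service iptables stop).

3. Install a standalone ClickHouse on each of the three nodes (one node is shown as an example)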

Check the Linux version:

$: cat /proc/version

Linux version 2.6.32-573.el6.x86_64 (mockbuild@c6b9.bsys.dev.centos.org) (gcc version 4.4.7 20120313 (Red Hat 4.4.7-16) (GCC) ) #1 SMP Thu Jul 23 15:44:03 UTC 2015

For this Linux version (CentOS 6, el6), download the following ClickHouse RPM packages:

clickhouse-client-19.9.5.36-1.el6.x86_64.rpm

clickhouse-common-static-19.9.5.36-1.el6.x86_64.rpm

clickhouse-server-19.9.5.36-1.el6.x86_64.rpm

clickhouse-server-common-19.9.5.36-1.el6.x86_64.rpm

If your Linux is the following version:

Linux version 3.10.0-957.el7.x86_64 (mockbuild@kbuilder.bsys.centos.org) (gcc version 4.8.5 20150623 (Red Hat 4.8.5-36) (GCC) ) #1 SMP Thu Nov 8 23:39:32 UTC 2018

use these ClickHouse packages instead:

clickhouse-client-19.16.3.6-1.el7.x86_64.rpm

clickhouse-common-static-19.16.3.6-1.el7.x86_64.rpm

clickhouse-server-19.16.3.6-1.el7.x86_64.rpm

clickhouse-server-common-19.16.3.6-1.el7.x86_64.rpm

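Download address: https://repo.yandex.ru/clickhouse/deb/stable/main/

Place the RPM packages in a directory (here /home/clickhouse/pack/6/) and install them in the following order:

$:rpm -ivh clickhouse-common-static-19.9.5.36-1.el6.x86_64.rpm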

$:rpm -ivh clickhouse-server-common-19.9.5.36-1.el6.x86_64.rpm

$:rpm -ivh clickhouse-server-19.9.5.36-1.el6.x86_64.rpm

$:rpm -ivh clickhouse-client-19.9.5.36-1.el6.x86_64.rpm
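You can wrap the install order above (common-static, server-common, server, client) in a helper, so the same commands work for both the el6 and el7 package sets. This sketch assumes the RPM files are in the current directory and follow the file-name pattern listed above. `clickhouse_rpms` and `install_clickhouse_rpms` are hypothetical names. With DRY_RUN=1, the helper only prints the commands.

```shell
# clickhouse_rpms <version> <dist>
# Prints the package file names in install order.
# Assumption: files are named <name>-<version>-1.<dist>.x86_64.rpm as listed above.
clickhouse_rpms() {
  local version dist pkg
  version="$1"
  dist="$2"
  for pkg in clickhouse-common-static clickhouse-server-common \
             clickhouse-server clickhouse-client; do
    echo "${pkg}-${version}-1.${dist}.x86_64.rpm"
  done
}

# install_clickhouse_rpms <version> <dist>
# Runs rpm -ivh for each package in order; with DRY_RUN=1 it only prints them.
install_clickhouse_rpms() {
  local f
  for f in $(clickhouse_rpms "$1" "$2"); do
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "rpm -ivh $f"
    else
      rpm -ivh "$f" || return 1
    fi
  done
}
```

For example, DRY_RUN=1 install_clickhouse_rpms 19.9.5.36 el6 prints the four rpm commands for CentOS 6. To install, run the command as root without DRY_RUN.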

After the RPMs are installed, the clickhouse-server and clickhouse-client configuration directories exist:

$: ll /etc/clickhouse-server/

-rw-r--r-- 1 root root 17642 Nov 15 11:44 config.xml

-rw-r--r-- 1 root root 5609 Jul 21 18:16 users.xml

$: ll /etc/clickhouse-client/

drwxr-xr-x 2 clickhouse clickhouse 4096 Nov 15 10:55 conf.d

-rw-r--r-- 1 clickhouse clickhouse 1568 Jul 21 17:27 config.xml

In /etc/clickhouse-server/config.xml, change only the data directory:

```xml
<!-- Path to data directory, with trailing slash. -->
<path>/home/clickhouse/data/clickhouse/</path>
```

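Make sure the disk holding this directory has enough free space.

You can adjust other settings to suit your environment. Check that the configured ports are not already in use.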
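You can also change the data directory non-interactively. The sketch below assumes config.xml contains a single one-line path element, and that sed supports -i with a backup suffix (GNU and BSD sed both do). `set_data_path` is a hypothetical helper. It adds the trailing slash that the config comment asks for.

```shell
# set_data_path <data_dir> [config_file]
# Rewrites the single-line <path>...</path> element of config.xml.
# Assumption: only one such line exists; <tmp_path> etc. are left untouched.
# A backup of the original file is kept as <config_file>.bak.
set_data_path() {
  local data_dir config
  data_dir="$1"
  config="${2:-/etc/clickhouse-server/config.xml}"
  # The config comment asks for a trailing slash.
  case "$data_dir" in
    */) ;;
    *) data_dir="$data_dir/" ;;
  esac
  sed -i.bak "s|<path>[^<]*</path>|<path>${data_dir}</path>|" "$config"
}
```

The new directory also typically needs to exist and be writable by the clickhouse user that the server runs as. For example: mkdir -p /home/clickhouse/data/clickhouse && chown -R clickhouse:clickhouse /home/clickhouse/data/clickhouse.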

Start clickhouse-server:

$: /etc/init.d/clickhouse-server start

If libicu is not installed, the following error is reported:

Start clickhouse-server service: /usr/bin/clickhouse-extract-from-config: error while loading shared libraries: libicui18n.so.42: cannot open shared object file: No such file or directory

Cannot obtain value of path from config file: /etc/clickhouse-server/config.xml

Installing libicu:

Download the libicu RPM packages:

libicu-4.2.1-14.el6.x86_64.rpm

libicu-devel-4.2.1-14.el6.x86_64.rpm

Put them in a directory on the server and run the following from that directory:

$: rpm -ivh libicu-4.2.1-14.el6.x86_64.rpm

$: rpm -ivh libicu-devel-4.2.1-14.el6.x86_64.rpm

Note that these packages must also match your Linux version.
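You can list missing shared libraries such as libicui18n.so.42 before starting the server by using ldd, which marks them as "=> not found". The sketch below is an assumption-based helper; `parse_missing_libs` and `missing_libs` are hypothetical names. It prints one missing library per line. Empty output means ldd resolved every library.

```shell
# parse_missing_libs
# Reads ldd output on stdin and prints the name of every library
# that ldd reports as "=> not found", one per line.
parse_missing_libs() {
  awk '/=> not found/ {print $1}'
}

# missing_libs <binary>
# Runs ldd on a binary and lists the libraries it cannot resolve.
missing_libs() {
  ldd "$1" | parse_missing_libs
}
```

For example, run missing_libs /usr/bin/clickhouse-extract-from-config against the binary named in the error message above.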

After the installation, start the server:

$: /etc/init.d/clickhouse-server start

The following output indicates a successful start:

Start clickhouse-server service: Path to data directory in /etc/clickhouse-server/config.xml: /var/lib/clickhouse/

DONE

To stop clickhouse-server:

$: /etc/init.d/clickhouse-server stop

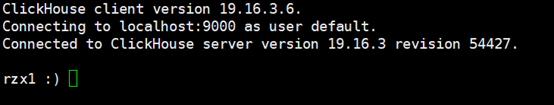

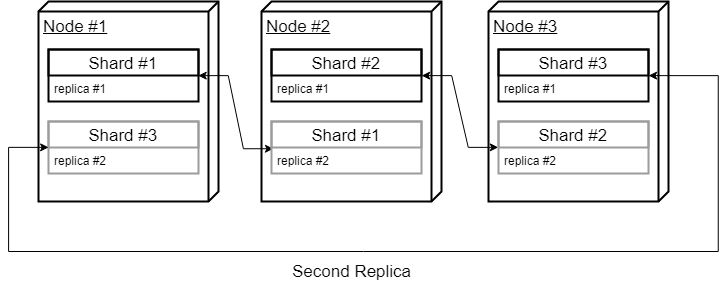

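Connect with clickhouse-client:

$: clickhouse-client --host ch201 --port 9000

When clickhouse-client reports that it has connected (localhost 9000 in the author's run), you are in the client.

Verify with a query: select 1

If it returns 1, ClickHouse is fully installed and working correctly.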
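You can run the same check non-interactively, which is convenient for verifying all three nodes in one go. This sketch assumes clickhouse-client is on the PATH (or that CLICKHOUSE_CLIENT points to it) and that the native port is 9000, as above. `ch_ping` is a hypothetical helper name.

```shell
# ch_ping <host> [port]
# Succeeds if the server on <host> answers "SELECT 1" with 1.
# Assumption: clickhouse-client is on PATH, or CLICKHOUSE_CLIENT names the binary.
ch_ping() {
  local host port result
  host="$1"
  port="${2:-9000}"
  result="$("${CLICKHOUSE_CLIENT:-clickhouse-client}" \
            --host "$host" --port "$port" --query "SELECT 1" 2>/dev/null)" || return 1
  [ "$result" = "1" ]
}
```

For example: for h in ch201 ch202 ch203; do ch_ping "$h" && echo "$h ok" || echo "$h FAILED"; done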

4. Cluster deployment

After the single-node installation has succeeded on all three nodes following the steps above, configure the cluster.

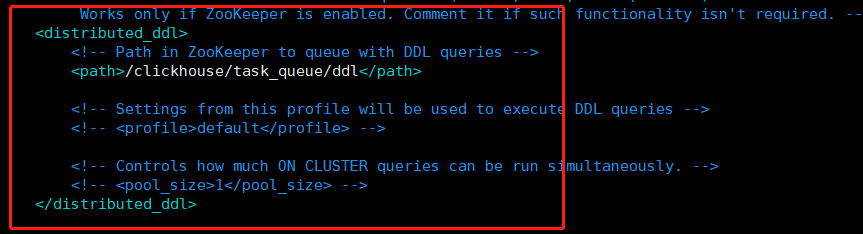

4.1. Using one node as an example, edit /etc/metrika.xml (vim /etc/metrika.xml) and add the following configuration:

```xml
<yandex>
    <clickhouse_remote_servers>
        <perftest_3shards_1replicas>
            <shard>
                <internal_replication>true</internal_replication>
                <replica>
                    <host>ch201</host>
                    <port>9000</port>
                </replica>
            </shard>
            <shard>
                <internal_replication>true</internal_replication>
                <replica>
                    <host>ch202</host>
                    <port>9000</port>
                </replica>
            </shard>
            <shard>
                <internal_replication>true</internal_replication>
                <replica>
                    <host>ch203</host>
                    <port>9000</port>
                </replica>
            </shard>
        </perftest_3shards_1replicas>
    </clickhouse_remote_servers>

    <!-- ZooKeeper settings -->
    <zookeeper-servers>
        <node index="1">
            <host>ch201</host>
            <port>2182</port>
        </node>
        <node index="2">
            <host>ch202</host>
            <port>2182</port>
        </node>
        <node index="3">
            <host>ch203</host>
            <port>2182</port>
        </node>
    </zookeeper-servers>

    <macros>
        <replica>ch203</replica>
    </macros>

    <networks>
        <ip>::/0</ip>
    </networks>

    <clickhouse_compression>
        <case>
            <min_part_size>10000000000</min_part_size>
            <min_part_size_ratio>0.01</min_part_size_ratio>
            <method>lz4</method>
        </case>
    </clickhouse_compression>
</yandex>
```

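Most of this configuration is identical on all nodes. Only the following part must be set according to each node's own IP/hostname:

```xml
<macros>
    <replica>ch203</replica>
</macros>
```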

You edit /etc/metrika.xml because /etc/clickhouse-server/config.xml contains the following comment:

```xml
<!-- If element has 'incl' attribute, then for it's value will be used corresponding substitution from another file.
     By default, path to file with substitutions is /etc/metrika.xml. It could be changed in config in 'include_from' element.
     Values for substitutions are specified in /yandex/name_of_substitution elements in that file.
-->
```

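Copy /etc/metrika.xml to the other two nodes with scp. Then, on each node, set the replica value in the macros block to that node's own IP/hostname:

```xml
<macros>
    <replica>ch203</replica>
</macros>
```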
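Once the file has been copied, you can script the change to the macros value on each node. The sketch below assumes the metrika.xml layout above. In that layout, the macros replica value sits on a single line, with the opening tag, value and closing tag together. The replica blocks of the cluster definition span several lines, so they do not match. `set_replica_macro` is a hypothetical helper.

```shell
# set_replica_macro <hostname> [file]
# Replaces the one-line <replica>VALUE</replica> macro with <hostname>.
# Assumption: the multi-line <replica> blocks under <shard> are not written
# on one line, so they do not match. A backup is kept as <file>.bak.
set_replica_macro() {
  local host file
  host="$1"
  file="${2:-/etc/metrika.xml}"
  sed -i.bak "s|<replica>[^<]*</replica>|<replica>${host}</replica>|" "$file"
}
```

For example, on ch201 after copying the file from ch203, run set_replica_macro ch201 (or set_replica_macro "$(hostname -s)").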

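5. After completing the configuration above, start the ClickHouse service on every node, the same way as for a single node. Once it starts without errors, check the ClickHouse log file (by default under /var/log/clickhouse-server/). If it shows the following information, the setup is essentially working.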

6. Further verification

On every node, start the ClickHouse client (exactly as for a single node) and query the cluster information:

select * from system.clusters;

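On ch201, ch202 and ch203, the information inside the red box is essentially the same, with small differences. The rows outside the red box are from the earlier single-node setup.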
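A narrower query than reading the whole system.clusters table can confirm that the cluster from metrika.xml is visible with all three hosts. This sketch assumes clickhouse-client is on the PATH (or CLICKHOUSE_CLIENT is set). It uses the cluster name perftest_3shards_1replicas from the configuration above. `check_cluster` is a hypothetical helper. With 3 shards of 1 replica each, the expected row count is 3.

```shell
# Cluster name taken from metrika.xml above.
CLUSTER_NAME="perftest_3shards_1replicas"

# check_cluster [host] [expected_rows]
# Counts the rows of system.clusters for CLUSTER_NAME and compares them
# with the expected number (3 shards x 1 replica = 3).
# Assumption: clickhouse-client is on PATH, or CLICKHOUSE_CLIENT names the binary.
check_cluster() {
  local host expected count
  host="${1:-localhost}"
  expected="${2:-3}"
  count="$("${CLICKHOUSE_CLIENT:-clickhouse-client}" --host "$host" \
    --query "SELECT count() FROM system.clusters WHERE cluster = '${CLUSTER_NAME}'")" || return 1
  if [ "$count" = "$expected" ]; then
    echo "$CLUSTER_NAME: $count hosts"
  else
    echo "$CLUSTER_NAME: expected $expected hosts, got $count" >&2
    return 1
  fi
}
```

Run check_cluster ch201, check_cluster ch202 and check_cluster ch203. Each node should report the same three hosts.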

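At this point the ClickHouse cluster deployment is complete. Production environments may need additional configuration depending on data volume.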

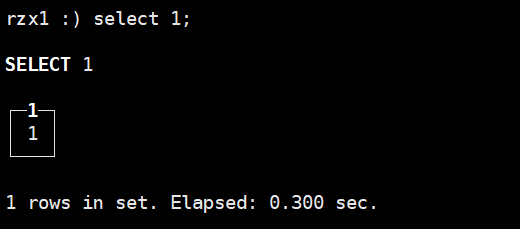
